From monitoring palm oil plantations to cutting energy use in data centres, Ellen R Delisio looks at how companies used IT to get a shop-floor view of their operations in 2016

While businesses have long used big data analytics to improve marketing and sales, 2016 saw an increasing use of big data analysis to manage issues in supply chains.

Data is growing globally at a rate of about 59% a year, and arriving faster, in larger quantities and covering more topics than ever before. Aiding the data flow is an anticipated growth in the number of satellites, now that Nasa and a handful of countries are not the only ones capable of launching them.

Big data has also become less exotic. Corporations are exploring how to use it to create products and services and are developing the resources they need to apply it. Walmart, for example, collects and manages 2.5 petabytes, or 2.5 × 10¹⁵ bytes, of data within an hour of a customer’s purchases.

In 2016, more companies have been focusing on putting big data to work with practices proven to generate return on investment through gains in productivity and revenue as well as decreased risk.

A survey of almost 1,200 professionals worldwide conducted by DNV GL this year indicated that 65% see big data playing a major role in the future of their companies and 76% expect to maintain or increase investments in big data.

But with these opportunities come sticky issues, such as ensuring privacy and updating regulations, including the UK’s 1998 Data Protection Act. In October, the UK government launched the All-Party Parliamentary Group on Data Analytics, with the aim of highlighting the opportunities big data offers, and keeping MPs apprised of developments, applications and issues such as securing sensitive information. The group plans to link the public and private sectors in an effort to craft more effective big data policies and research the best ways to develop data analysis skills.

Data sharing

In 2016, the non-profit sector faced its own obstacles to using big data. The NGO Every Action reported that while 87% of non-profit professionals see data as valuable to operations at their organisation, just 6% think the data is being used effectively. Building capacity to connect research with real-time operations is a goal for organisations such as Direct Relief, which supports healthcare providers and facilities. Part of the problem is that NGOs focus on one piece of the world; they deal with a niche problem and need a much larger data set to use big data effectively, said Andrew Schroeder, Direct Relief’s director of research and analysis. “So far it has been easier to do data work and response work in separate buckets; to connect those pieces together is a continuous challenge.”

The sector’s persistent data-sharing problem makes it hard to look at a bigger picture. “More of the NGO data systems should be automatic and automatically shared, with elective privacy based on certain information,” Schroeder said. “You don’t get to a big data view until you can share as much as possible right away.”

The most distinctive aspect of big data is its ability to provide immediate information for decision-making. As we pointed out in Ethical Corporation in July, the influx of timely facts and figures is expected to alter, or even eliminate, annual sustainability reports in future. John Hsu, sustainability reporting data specialist at the Carbon Trust, said: “With big data, it is potentially possible to analyse every facet of a company’s sustainability performance in real time, which gives companies the opportunity not only to react in real time, but also to predict and pre-emptively counteract potential sustainability risks across the full breadth of its business, before it even occurs.”

Disruptive technologies

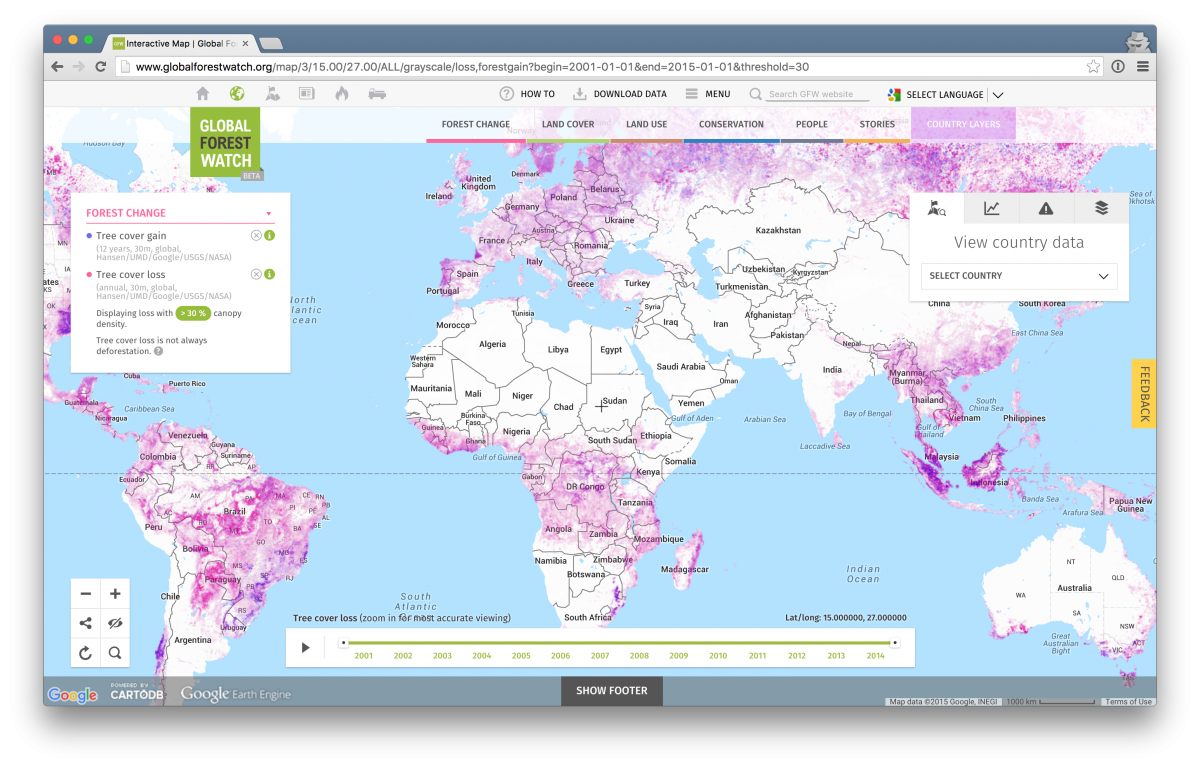

This year we saw big data analytics begin to have a major impact on supply chain management in the palm oil industry, with the launch of Global Forest Watch’s forest tracking system, which monitors deforestation worldwide. It can pinpoint where trees and peat have been cleared and burned to make way for palm. Information is available within days, instead of in a written report a year later, allowing companies to make rapid decisions. Users include NGOs and companies dealing in commodities such as palm oil, soy and cattle. Global Forest Watch’s palm oil mill database now includes 1,000 mills. Companies could be buying from multiple mills, but the real-time data allows them to identify which ones are violating sustainability agreements by clearing more forest or burning peat.

Another source of big data, not without controversy, is artificial intelligence (AI). In November we reported on the claim by Mustafa Suleyman, co-founder of the UK AI start-up DeepMind, that AI’s problem-solving power could be harnessed to address sustainability issues such as climate change, food waste and water shortages. The company, which was bought by Google, was able to use its algorithms to cut energy use at Google’s data centres by 15%. Days later DeepMind signed a five-year contract with the UK’s National Health Service to provide an app that will allow doctors and nurses to identify patterns in patients’ blood test data and take potentially life-saving action.

John Elkington, chief pollinator at Volans, told the same conference that disruptive new technologies such as AI, machine learning and drones hold amazing potential for sustainability, but they also hold perils. He gave the example of the recent denial-of-service attacks, which used dumb devices in homes to bring down much of America’s internet. Elkington said he found it troubling that “precariously few” sustainability professionals are engaged with disruptive new technologies at this formative moment, with most focusing instead on old technologies, such as oil and gas and chemicals.

Digital revolution

Tara Norton, managing director of Business for Social Responsibility, said 2016 has seen numerous existing IT systems providers, along with emerging technology companies, offering services to help companies manage big data and their supply chains. As demand grows, so will the availability of programmes to analyse data more quickly. But she said challenges remain in assessing big data deep into some supply chains, because smallholders, day labourers, and other less organised or less networked participants make digitalising information difficult.

Another key trend, said Norton, is that companies are actively looking for ways to replace at least a portion of their supply chain audits with other forms of real-time data, including data on worker voices, but also looking for ways to harness other data. “This seems to be mostly conversation at the moment.”

One thing UK companies will be watching next year is the implications of the shock referendum result in June for data protection regulation. As we reported, in May the European Commission published details of its new rules governing data protection, which will apply from May 2018, and cover issues around consent, notification, privacy by design, the right to erasure, data portability, and liability for data processors. The new EU rules will increase fines for infringement from relatively low levels to maximums of €20m or 4% of worldwide turnover, depending on the offence. However, with the government looking to trigger Article 50 to begin negotiations to leave the EU next year, data regulation is just one of many important areas that have been thrown into uncertainty.

Big data and the supply chain is number 9 in Ethical Corporation's 10 issues that shaped 2016 series.